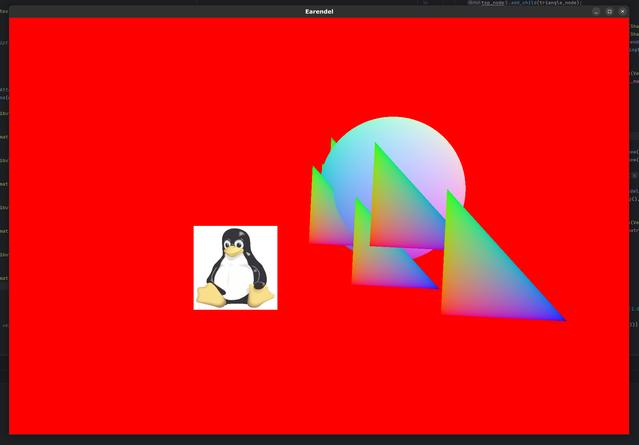

In my previous post on my game engine - Earendel, I spoke about wanting to build a basic object viewer. I've kinda, sorta managed to get there. Behold! I have a rotating teapot!

There's such a long way to go, but this marks a major step forward, I think. There are a few things going on in that simple, short demo. Flat shading and model loading are the major ones, but there has been a lot of work under the bonnet too.

I quite like flat shading when the occasion calls for it. If a model has no textures assigned, it's not a bad way to visualise the mesh, I think. Flat shading is done with per-face normals. That is to say, every triangle has a single normal that points perpendicular to the plane the triangle lies in. However, Vulkan (like other graphics APIs) takes its normals per vertex. This allows for smooth, continuous shading across curved surfaces, but is no good for per-face normals.

Often, the way to get around this is to pass some per-face data along with the vertex data. This can work, but I've no idea how to make it work in Vulkan; the whole thing seems centred around individual vertices. There is another option though - the geometry shader.
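For comparison, the usual way to pass per-face data with the vertex data is to stop sharing vertices between triangles: every triangle gets its own copy of its three vertices, and all three copies carry that triangle's normal. The sketch below shows the idea on the CPU in plain Rust. It is not Earendel's code; `FlatVertex` and `flatten_mesh` are hypothetical names, and it assumes counter-clockwise winding when choosing the normal's direction.

```rust
/// A vertex carrying its position and the normal of the face it belongs to.
#[derive(Debug, Clone, Copy, PartialEq)]
pub struct FlatVertex {
    pub position: [f32; 3],
    pub normal: [f32; 3],
}

fn sub(a: [f32; 3], b: [f32; 3]) -> [f32; 3] {
    [a[0] - b[0], a[1] - b[1], a[2] - b[2]]
}

fn cross(a: [f32; 3], b: [f32; 3]) -> [f32; 3] {
    [
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    ]
}

fn normalize(v: [f32; 3]) -> [f32; 3] {
    let len = (v[0] * v[0] + v[1] * v[1] + v[2] * v[2]).sqrt();
    if len == 0.0 {
        return v; // degenerate triangle: leave the zero vector as-is
    }
    [v[0] / len, v[1] / len, v[2] / len]
}

/// Expand an indexed mesh so that every triangle owns its three vertices,
/// each tagged with the triangle's face normal (counter-clockwise winding assumed).
pub fn flatten_mesh(positions: &[[f32; 3]], indices: &[u32]) -> Vec<FlatVertex> {
    let mut out = Vec::with_capacity(indices.len());
    for tri in indices.chunks_exact(3) {
        let p0 = positions[tri[0] as usize];
        let p1 = positions[tri[1] as usize];
        let p2 = positions[tri[2] as usize];
        let normal = normalize(cross(sub(p1, p0), sub(p2, p0)));
        for &p in &[p0, p1, p2] {
            out.push(FlatVertex { position: p, normal });
        }
    }
    out
}
```

Calling `flatten_mesh` on a unit quad in the XY plane (four positions, indices `[0, 1, 2, 0, 2, 3]`) gives six vertices, all with the normal `[0.0, 0.0, 1.0]`. The cost is memory: any vertex shared by several triangles is duplicated once per triangle.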

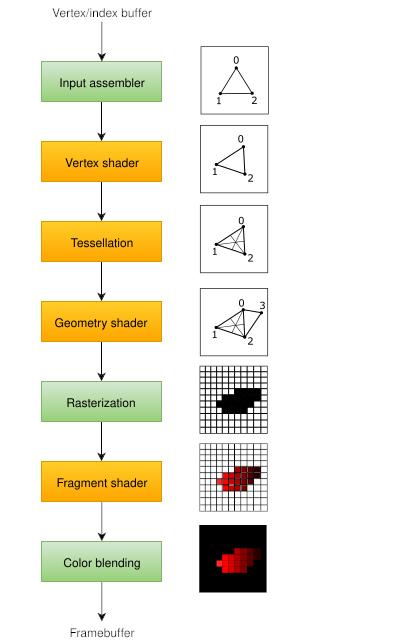

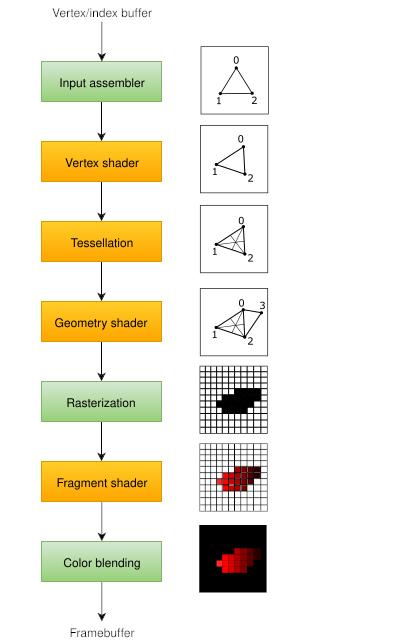

Info: Recall that a Shader is a small program written in GLSL (or HLSL) that gets compiled down and placed into a slot in our graphics pipeline. Its job is to process the data coming in and send the results to the next stage of the pipeline. We want a pipeline that goes from vertices and triangles to pixels on a screen.

The image above shows a basic view of the graphics pipeline. Our geometry shader sits in the middle there. Its job is to spit out some number of triangles, lines or points given the primitives arriving from the previous stage (the tessellation stage if there is one, otherwise the vertex shader). We have control over just what kind of simple shapes are sent onwards, given some vertices and indices. For example, if we are sent a single point in the middle of the screen, a geometry shader could take that point and spit out a triangle centred on that point, or 3 triangles, or many more (up to a device limit on output vertices). Not only that, we can send out colour or normal information. This is where we can get our per-face normals from. Every time we get a triangle, we can compute the normal for that triangle inside the shader and send it on to the fragment shader, where we do the lighting. The geometry shader looks a bit like this:

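```glsl
#version 450 core

layout(triangles) in;                         // Input: one triangle (3 vertices)
layout(triangle_strip, max_vertices = 3) out; // Output: a strip of 3 vertices (one triangle)

layout(location = 0) in vec3 vertColour[];    // Coming in from face_normal.vert
layout(location = 1) in vec2 texCoord[];      // Coming in from face_normal.vert

layout(location = 0) out vec3 fragColour;
layout(location = 1) out vec3 faceNormal;

// Normal of the plane containing the incoming triangle. gl_Position holds
// whatever the vertex shader wrote, so the normal is in that same space;
// vec3(...) keeps x, y, z and drops w. Swapping the operands of cross()
// flips the normal, so the right order depends on the winding in use.
vec3 GetNormal()
{
    vec3 a = vec3(gl_in[0].gl_Position) - vec3(gl_in[1].gl_Position);
    vec3 b = vec3(gl_in[2].gl_Position) - vec3(gl_in[1].gl_Position);
    return normalize(cross(a, b));
}

void main()
{
    // One normal for the whole triangle gives flat shading.
    vec3 normal = GetNormal();

    for (int i = 0; i < 3; i++) {
        gl_Position = gl_in[i].gl_Position; // Pass through
        fragColour = vertColour[i];
        faceNormal = normal;
        EmitVertex();
    }

    EndPrimitive(); // End the primitive
}
```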

I've wanted to include geometry shaders in this engine for a while now, and this was the perfect opportunity. They are optional for the most part, but I reckon we can do some fun things with them.

In the previous post we talked a bit about the contract between nodes on the CPU side and the GChunks on the Vulkan/GPU side. I've expanded the contracts quite a bit. Nodes now own their contracts if they have them. Geometry now sits inside the contract by default. Every contract has a UBO of a certain size, which contains the uniform data at this point. Finally, the contract also contains zero or more textures (more on that later).

Info: Recall that a Uniform Buffer Object (or UBO) is an object passed to the Shader programs. It often contains the transformation matrix as well as any other useful variables for the shaders to process the geometry correctly.

Contracts are created between Nodes and GShards. A contract is created as soon as we see geometry in the scene-graph. If a node contains geometry then we must do some drawing (unless this node is marked as invisible). At this point we must have everything ready to send to Vulkan. We must match up our CPU-side UBO with what the Shader expects. We must have the geometry in the right format, and any textures must be present. The contract contains all this information in a format Vulkan can use. All this is taken care of automatically by the Earendel engine. But what if we want to override that with our own contract?

"Ok, hold up", you might say. "What are you on about?" Well, quite often you might want most of the default setup, but what if you have a shader that needs a single extra number, like a brightness or time value? How do you send that directly to the shader from your program? You use a custom contract, in the form of a custom UBO. Earendel's default UBO contains three matrices - the camera's projection and view matrices and the geometry's transformation matrix (also called the model matrix). Most of the time this is fine, but there will be times when we want more. Indeed, when I start to add lights to Earendel we'll need a different UBO again.

This presents a problem - a problem that is a bit more difficult to solve in Rust than in other languages. At its heart, a UBO is just a block of memory whose size is known at compile time. That size isn't going to change at runtime, although UBOs may be created or deleted at runtime. A programmer will need to set out the UBO layout, and so fix its size, when they are writing a program. But while the programmer knows what the UBO looks like, Earendel doesn't. Our contract needs a UBO, but a UBO could be anything.

In an object-oriented language, we might use inheritance; all UBOs must inherit from the master UBO class. We might consider a macro-based approach if we were using C. In Rust, however, I settled on the dyn Trait approach.

Info: A Trait in Rust is a bit like a Java interface (or, loosely, a C++ abstract base class). A trait has a name and a list of functions. Rust types can implement a trait; when they do, they must define these functions (unless the trait supplies a default implementation).

Let's say we have two UBOs - our basic one and a custom one that a programmer has defined. We want to use both in our program. We start by defining a UniformBufferObject trait:

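```rust
// Matrix4<f32> (from the maths library) and Uniform are defined outside this snippet.
pub trait UniformBufferObject {
    /// Return the size in bytes of this UBO type.
    fn get_ubo_size(&self) -> usize;

    /// Set the geometry's transformation (model) matrix.
    fn set_model_matrix(&mut self, model_matrix: Matrix4<f32>);

    /// Set the camera's view matrix.
    fn set_view_matrix(&mut self, view_matrix: Matrix4<f32>);

    /// Set the camera's projection matrix.
    fn set_projection_matrix(&mut self, projection_matrix: Matrix4<f32>);

    /// Set an extra, UBO-specific uniform value at the given position.
    fn set_uniform(&mut self, uniform: &Uniform, position: usize);
}
```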

Both our UBO types (basic and user) will implement this trait. Here is the implementation for the basic one:

```rust
impl UniformBufferObject for UBODefault {
    fn get_ubo_size(&self) -> usize {
        ::std::mem::size_of::<Matrix4<f32>>() * 3 // The three matrices
    }

    fn set_model_matrix(&mut self, model_matrix: Matrix4<f32>) {
        self.model_matrix = model_matrix;
    }

    fn set_view_matrix(&mut self, view_matrix: Matrix4<f32>) {
        self.view_matrix = view_matrix;
    }

    fn set_projection_matrix(&mut self, projection_matrix: Matrix4<f32>) {
        self.projection_matrix = projection_matrix;
    }

    fn set_uniform(&mut self, _uniform: &Uniform, _position: usize) {
        // Ignored, as UBODefault has no extra uniforms.
        // TODO - raise an error
    }
}
```
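The post doesn't show the user-defined UBO, so here is one possible shape for it. This is a hypothetical sketch: `UBOTimed`, its `time` field and the `Uniform` enum are made up, and `Matrix4<f32>` is swapped for a plain `[[f32; 4]; 4]` alias so the example has no dependencies. `#[repr(C)]` fixes the field order, so the field offsets (0, 64, 128 and 192 bytes) match a std140 uniform block declaring three `mat4`s followed by a `float`, and `size_of` reports 3 × 64 + 4 = 196 bytes at compile time.

```rust
use std::mem::size_of;

/// Stand-in for the maths library's 4x4 matrix (an assumption for this sketch).
pub type Mat4 = [[f32; 4]; 4];

/// Hypothetical extra uniform values a UBO might accept.
#[derive(Debug, Clone, Copy)]
pub enum Uniform {
    Float(f32),
}

pub trait UniformBufferObject {
    fn get_ubo_size(&self) -> usize;
    fn set_model_matrix(&mut self, model_matrix: Mat4);
    fn set_view_matrix(&mut self, view_matrix: Mat4);
    fn set_projection_matrix(&mut self, projection_matrix: Mat4);
    fn set_uniform(&mut self, uniform: &Uniform, position: usize);
}

/// A user-defined UBO: the three default matrices plus a time value.
#[repr(C)]
#[derive(Debug, Clone, Copy, Default)]
pub struct UBOTimed {
    pub model_matrix: Mat4,
    pub view_matrix: Mat4,
    pub projection_matrix: Mat4,
    pub time: f32,
}

impl UniformBufferObject for UBOTimed {
    fn get_ubo_size(&self) -> usize {
        size_of::<Self>() // 3 * 64 + 4 = 196 bytes, fixed at compile time
    }

    fn set_model_matrix(&mut self, model_matrix: Mat4) {
        self.model_matrix = model_matrix;
    }

    fn set_view_matrix(&mut self, view_matrix: Mat4) {
        self.view_matrix = view_matrix;
    }

    fn set_projection_matrix(&mut self, projection_matrix: Mat4) {
        self.projection_matrix = projection_matrix;
    }

    fn set_uniform(&mut self, uniform: &Uniform, position: usize) {
        match (position, uniform) {
            // Slot 0 is the time value.
            (0, Uniform::Float(t)) => self.time = *t,
            // Any other slot is unknown to this UBO; a real engine might report an error.
            _ => {}
        }
    }
}
```

Both this type and the default one can then sit behind the same trait object, which is what the contract needs.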

So far so good, but now we need to modify our contract to accept anything that implements the UniformBufferObject trait. We can do that as follows:

```rust
use std::sync::{Arc, Mutex};

pub type UBORef = Arc<Mutex<dyn UniformBufferObject + Send>>;
```

We define a type - UBORef. This follows the Arc<Mutex<>> pattern I mentioned in the previous post. The dyn keyword in Rust indicates that a trait is being used as a trait object, allowing for dynamic dispatch. This means that the specific type implementing the trait is determined at runtime, rather than at compile time. There is an overhead here (each method call goes through a vtable), but this approach does indeed work. The + Send after the type is a little more complex; I don't fully understand it. But it is required if you are sending this dyn Trait object between threads. There's an in-depth tutorial here.

Info: Recall that an Arc is a thread-safe, reference-counted smart pointer. The Mutex ensures only one thread at a time can access, and therefore modify, the data inside it. This combination allows data to be shared and modified safely between threads.
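On the `+ Send` question: `Send` is a marker trait for types that can be moved to another thread. A `Mutex<T>` can only be shared between threads if `T: Send`, and an `Arc<T>` can only be sent to another thread if `T` is both `Send` and `Sync`. A bare `dyn Trait` says nothing about the concrete type behind it, so `+ Send` is the promise that makes `Arc<Mutex<dyn ...>>` usable across threads. The sketch below demonstrates this with a cut-down, hypothetical `Ubo` trait and `TimeUbo` type rather than Earendel's; deleting `+ Send` from the alias makes the `thread::spawn` call fail to compile.

```rust
use std::sync::{Arc, Mutex};
use std::thread;

/// A cut-down stand-in for the engine's UniformBufferObject trait.
pub trait Ubo {
    fn set_time(&mut self, time: f32);
    fn time(&self) -> f32;
}

pub struct TimeUbo {
    pub time: f32,
}

impl Ubo for TimeUbo {
    fn set_time(&mut self, time: f32) {
        self.time = time;
    }
    fn time(&self) -> f32 {
        self.time
    }
}

/// Same shape as UBORef: shared ownership (Arc), exclusive access (Mutex),
/// dynamic dispatch (dyn) and a promise that the concrete type may cross threads (Send).
pub type UboRef = Arc<Mutex<dyn Ubo + Send>>;

/// Update the UBO from a worker thread, then read the value back on this thread.
pub fn update_on_worker(ubo: &UboRef, time: f32) -> f32 {
    let worker_ref = Arc::clone(ubo); // bump the reference count for the worker
    let handle = thread::spawn(move || {
        worker_ref.lock().unwrap().set_time(time);
    });
    handle.join().unwrap();
    ubo.lock().unwrap().time()
}
```

A concrete `Arc::new(Mutex::new(TimeUbo { time: 0.0 }))` coerces to `UboRef` automatically, because `TimeUbo` implements `Ubo` and is itself `Send`.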

It took me a long while to figure out this approach and get it to work, and I'm still wondering if it's the best one. All the UBO definitions are known at compile time; we just need to pick the right one when we traverse the nodes. I feel like an enum might get around this, if it's possible for a user to add their own UBO type to a master enum held inside Earendel. Something like that? Anyway, for now it works well enough for my purposes. I'll come back to it eventually, I expect.
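For what it's worth, the enum idea might look something like this hypothetical sketch (`UboKind`, `DefaultUbo` and `TimedUbo` are made-up names, and the matrix type is a plain array alias). Dispatch becomes a `match` instead of a vtable call, and each variant's size is still known at compile time. The catch is that a downstream crate can't add variants to an enum defined inside Earendel, so this only works if the engine knows every UBO layout up front; one possible workaround would be for the engine to take the user's UBO type as a generic parameter.

```rust
use std::mem::size_of;

/// Stand-in 4x4 matrix type (an assumption for this sketch).
pub type Mat4 = [[f32; 4]; 4];

#[repr(C)]
#[derive(Debug, Clone, Copy, Default)]
pub struct DefaultUbo {
    pub model: Mat4,
    pub view: Mat4,
    pub projection: Mat4,
}

#[repr(C)]
#[derive(Debug, Clone, Copy, Default)]
pub struct TimedUbo {
    pub model: Mat4,
    pub view: Mat4,
    pub projection: Mat4,
    pub time: f32,
}

/// Every UBO layout the engine knows about, fixed at compile time.
#[derive(Debug, Clone, Copy)]
pub enum UboKind {
    Default(DefaultUbo),
    Timed(TimedUbo),
}

impl UboKind {
    /// Size in bytes of the variant's data (what would be copied to the GPU).
    pub fn size(&self) -> usize {
        match self {
            UboKind::Default(_) => size_of::<DefaultUbo>(),
            UboKind::Timed(_) => size_of::<TimedUbo>(),
        }
    }

    /// Every variant carries a model matrix, so no trait object is needed.
    pub fn set_model_matrix(&mut self, m: Mat4) {
        match self {
            UboKind::Default(u) => u.model = m,
            UboKind::Timed(u) => u.model = m,
        }
    }
}
```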

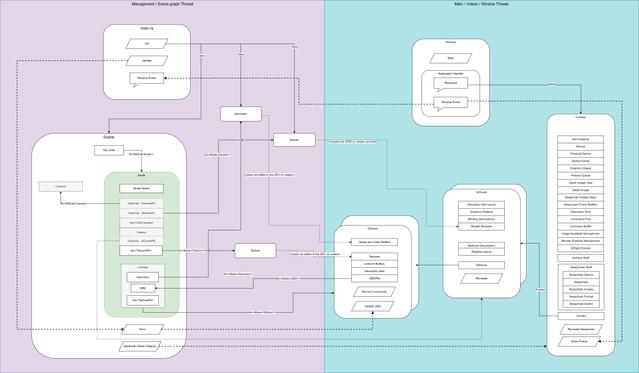

I've changed around some of the architecture to accommodate the aforementioned additions. The biggest change is around the contract, UBO and node.

Contracts are held by the nodes themselves now. As we march through the scene-graph, contracts are created automatically by Earendel when we need to do some drawing. If a developer wants to use a custom contract, they can create their own contract and UBO, attach them to a node, and Earendel will use these instead. GShards now have a pointer to the UBO they need, rather than to the scene-graph node. This means GShards only see what they need to see.

One subtle link on that diagram is the optional pointer from a node to a GChunk. Now why is this here? Well, as we go through the tree we need to know which node triggered the creation of a GChunk. A node might not trigger a draw (and therefore a GShard) but if it has a shader, we should create a GChunk ready for geometry to appear.

Cameras are now held via a pointer, instead of being owned directly by the nodes. Any geometry is now always held on the contract in the node and not by the node directly. This guarantees that if a node has geometry it will always have a contract, which is the behaviour we want.
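One way to express "geometry implies a contract" in the type system is to make the geometry a field of the contract and the contract an `Option` on the node, so there is simply nowhere else for geometry to live. The sketch below is a hypothetical reduction of that idea, not Earendel's actual definitions: the field names are made up, and `Geometry`, `Camera`, `Ubo` and `Texture` are placeholders.

```rust
use std::sync::{Arc, Mutex};

/// Placeholder types standing in for the engine's real ones.
#[derive(Debug, Default)]
pub struct Geometry {
    pub vertex_count: usize,
}
#[derive(Debug, Default)]
pub struct Camera;
#[derive(Debug, Default)]
pub struct Ubo;
#[derive(Debug, Default)]
pub struct Texture;

/// Everything the GPU side needs to draw one piece of geometry.
#[derive(Debug)]
pub struct Contract {
    pub geometry: Geometry,          // geometry lives only here
    pub ubo: Arc<Mutex<Ubo>>,        // shared with the GShard that draws it
    pub textures: Vec<Arc<Texture>>, // zero or more textures
}

#[derive(Debug, Default)]
pub struct Node {
    pub contract: Option<Contract>,         // Some(..) exactly when the node has geometry
    pub camera: Option<Arc<Mutex<Camera>>>, // cameras are referenced, not owned
    pub invisible: bool,
    pub children: Vec<Node>,
}

impl Node {
    /// Attaching geometry always wraps it in a default contract.
    pub fn set_geometry(&mut self, geometry: Geometry) {
        self.contract = Some(Contract {
            geometry,
            ubo: Arc::new(Mutex::new(Ubo)),
            textures: Vec::new(),
        });
    }

    pub fn geometry(&self) -> Option<&Geometry> {
        self.contract.as_ref().map(|c| &c.geometry)
    }

    /// Only visible nodes with a contract trigger a draw.
    pub fn needs_draw(&self) -> bool {
        !self.invisible && self.contract.is_some()
    }
}
```

Because `set_geometry` is the only way in, a node can never end up holding geometry without a contract around it.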

Textures are one of the major elements of any 3D engine. The process for using textures in Vulkan is somewhat complex. In a nutshell, we need to get a block of memory and put some pixels in it, then we need to create a Vulkan sampler, which will look at this memory and treat it as a texture. The Vulkan Tutorial has an excellent description of how to create and use textures in your application.

What does treating it as a texture mean? In a word - interpolation. Let's say we have two triangles forming a quad - just like in the video above - and a 256 by 256 image as our texture. How do we say which bit of the quad corresponds to which bit of the texture? Typically, we have a texture coordinate on each vertex. In this case, the bottom left vertex would have two floats - (0, 0). The top right would have texture coordinates (1.0, 1.0) and so on. When it comes to the fragment shader, each pixel to be drawn will have an interpolated texture coordinate. If the fragment is in the middle of the quad, we would expect it to have (0.5, 0.5) as its coordinate. Vulkan then scales (0.5, 0.5) up to position (128, 128) in our image and samples the texture there; with linear filtering, that means blending the texels around that point. This is linear sampling on a 2D texture, using normalised coordinates.
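To make the coordinate arithmetic concrete, here is a small CPU-side sketch of nearest and linear (bilinear) sampling of a single-channel image with normalised coordinates. It is not how the GPU does it internally, just the same maths: in Vulkan's convention texel centres sit at half-integer positions, so `u = 0.5` on a 256-wide image lands at 128.0, on the boundary between texels 127 and 128. Nearest filtering picks texel 128; linear filtering blends the two. The `Image` type and method names are hypothetical, and out-of-range indices are simply clamped to the edge.

```rust
/// A single-channel (greyscale) image stored row by row.
pub struct Image {
    pub width: usize,
    pub height: usize,
    pub texels: Vec<f32>,
}

impl Image {
    /// Fetch a texel, clamping indices to the edge of the image.
    fn texel(&self, x: i64, y: i64) -> f32 {
        let x = x.clamp(0, self.width as i64 - 1) as usize;
        let y = y.clamp(0, self.height as i64 - 1) as usize;
        self.texels[y * self.width + x]
    }

    /// Nearest filtering: scale the normalised coordinate by the size and take the floor.
    pub fn sample_nearest(&self, u: f32, v: f32) -> f32 {
        let x = (u * self.width as f32).floor() as i64;
        let y = (v * self.height as f32).floor() as i64;
        self.texel(x, y)
    }

    /// Linear filtering: texel centres are at i + 0.5, so shift by half a texel
    /// and blend the four surrounding texels by their fractional distances.
    pub fn sample_linear(&self, u: f32, v: f32) -> f32 {
        let x = u * self.width as f32 - 0.5;
        let y = v * self.height as f32 - 0.5;
        let (x0, y0) = (x.floor(), y.floor());
        let (ax, ay) = (x - x0, y - y0);
        let (x0, y0) = (x0 as i64, y0 as i64);
        let top = self.texel(x0, y0) * (1.0 - ax) + self.texel(x0 + 1, y0) * ax;
        let bottom = self.texel(x0, y0 + 1) * (1.0 - ax) + self.texel(x0 + 1, y0 + 1) * ax;
        top * (1.0 - ay) + bottom * ay
    }
}
```

With a 256 × 256 image whose texel values equal their column index, `sample_nearest(0.5, 0.5)` returns 128.0 and `sample_linear(0.5, 0.5)` returns 127.5, the average of columns 127 and 128.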

There's a lot more to texture sampling though. We can have 3D textures if we so wish. What happens if we sample outside of the range (e.g. (-0.5, 1.2))? Do we use a border or just repeat the texture? These are questions for the sampler.
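The "border or repeat" question maps onto the sampler's address modes (in Vulkan, `VK_SAMPLER_ADDRESS_MODE_REPEAT`, `VK_SAMPLER_ADDRESS_MODE_MIRRORED_REPEAT`, `VK_SAMPLER_ADDRESS_MODE_CLAMP_TO_EDGE` and `VK_SAMPLER_ADDRESS_MODE_CLAMP_TO_BORDER`, among others). Here is a rough sketch of what those four do to one out-of-range normalised coordinate, using a hypothetical `AddressMode` enum rather than the real Vulkan types and ignoring the per-texel details the spec works in.

```rust
#[derive(Debug, Clone, Copy, PartialEq)]
pub enum AddressMode {
    Repeat,
    MirroredRepeat,
    ClampToEdge,
    ClampToBorder,
}

/// Map a normalised coordinate into [0, 1] according to the address mode.
/// Returns None for ClampToBorder when the coordinate is outside [0, 1],
/// meaning "use the sampler's border colour instead of a texel".
pub fn apply_address_mode(coord: f32, mode: AddressMode) -> Option<f32> {
    match mode {
        // Keep only the fractional part: the texture tiles forever.
        AddressMode::Repeat => Some(coord - coord.floor()),
        // Every other tile is flipped, so edges meet their mirror image.
        AddressMode::MirroredRepeat => {
            let t = coord.rem_euclid(2.0); // position within one mirrored period [0, 2)
            Some(if t <= 1.0 { t } else { 2.0 - t })
        }
        // Stick to the nearest edge.
        AddressMode::ClampToEdge => Some(coord.clamp(0.0, 1.0)),
        // Inside the texture as usual; outside it, the border colour.
        AddressMode::ClampToBorder => {
            if (0.0..=1.0).contains(&coord) {
                Some(coord)
            } else {
                None
            }
        }
    }
}
```

For the example coordinate above, u = -0.5 becomes 0.5 under both repeat modes, 0.0 under clamp-to-edge, and the border colour under clamp-to-border.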

For now, I'm keeping it as simple as I can. We have linear sampling and 2D textures. We need to create a new vertex type - one with an extra 2 floats to hold the texture coordinate. I'm using the image crate to load the images and get the raw pixel colours out of JPEGs, PNGs or similar.
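Here is one possible shape for the new vertex type, as a sketch: the `TexturedVertex` name, the field order and the colour attribute are assumptions, not Earendel's actual definition. `#[repr(C)]` keeps the layout predictable, so the byte offsets in the comments are what the Vulkan vertex attribute descriptions would declare, and the stride is 32 bytes. The quad follows the corner convention described earlier, (0, 0) at the bottom left to (1, 1) at the top right, assuming a y-up position space.

```rust
use std::mem::size_of;

/// A vertex with position, colour and a 2D texture coordinate.
#[repr(C)]
#[derive(Debug, Clone, Copy, PartialEq)]
pub struct TexturedVertex {
    pub position: [f32; 3],  // byte offset 0
    pub colour: [f32; 3],    // byte offset 12
    pub tex_coord: [f32; 2], // byte offset 24
}

/// Byte stride between consecutive vertices in the vertex buffer.
pub const VERTEX_STRIDE: usize = size_of::<TexturedVertex>(); // 32 bytes

/// Two triangles forming a quad, with texture coordinates on each corner.
pub fn textured_quad() -> ([TexturedVertex; 4], [u16; 6]) {
    let white = [1.0, 1.0, 1.0];
    let vertices = [
        TexturedVertex { position: [-0.5, -0.5, 0.0], colour: white, tex_coord: [0.0, 0.0] }, // bottom left
        TexturedVertex { position: [0.5, -0.5, 0.0], colour: white, tex_coord: [1.0, 0.0] },  // bottom right
        TexturedVertex { position: [0.5, 0.5, 0.0], colour: white, tex_coord: [1.0, 1.0] },   // top right
        TexturedVertex { position: [-0.5, 0.5, 0.0], colour: white, tex_coord: [0.0, 1.0] },  // top left
    ];
    let indices = [0, 1, 2, 2, 3, 0];
    (vertices, indices)
}
```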

The architecture needs a bit of a change, as does the scene-graph traversal. A node can have many textures, so I use a vector of smart pointers to a texture object. Fine for now. However, textures will need to be part of the contract when a GShard gets drawn. As Earendel traverses the scene-graph, it holds onto the latest texture it finds. At drawing time, when a contract is created, the currently held texture is added to the contract. This means that one node can hold the texture, but many child nodes can share that texture if required. One example of this might be if we are drawing many 3D trees. Each might be subtly different in its geometry, but they all share the same texture.
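A minimal sketch of that "hold onto the latest texture" traversal, assuming a simplified, hypothetical tree where each node has a list of textures, an optional mesh name standing in for its geometry, and children. The texture in effect is passed down by value, so a node with its own textures replaces it for its whole subtree without leaking it into its siblings, and every drawable node records which texture its contract would receive.

```rust
use std::sync::Arc;

#[derive(Debug, PartialEq)]
pub struct Texture {
    pub name: String,
}

/// A stripped-down scene-graph node (hypothetical).
pub struct SceneNode {
    pub textures: Vec<Arc<Texture>>,
    pub mesh: Option<String>, // stands in for the node's geometry
    pub children: Vec<SceneNode>,
}

/// What a contract would receive at draw time: the mesh and the texture in effect.
#[derive(Debug)]
pub struct DrawCall {
    pub mesh: String,
    pub texture: Option<Arc<Texture>>,
}

/// Depth-first traversal. `current` is the latest texture seen on the path from
/// the root; a node with its own textures replaces it for its whole subtree.
pub fn collect_draws(node: &SceneNode, current: Option<Arc<Texture>>, out: &mut Vec<DrawCall>) {
    let current = match node.textures.last() {
        Some(tex) => Some(Arc::clone(tex)),
        None => current,
    };
    if let Some(mesh) = &node.mesh {
        out.push(DrawCall { mesh: mesh.clone(), texture: current.clone() });
    }
    for child in &node.children {
        collect_draws(child, current.clone(), out);
    }
}
```

For the trees example, a parent holding a "bark" texture and three child meshes gives three draw calls that share one `Arc` to the same texture, unless a child brings its own.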

When adding texture support, we need to think carefully about Vulkan's Descriptors and Descriptor Sets. I've not mentioned them before, largely because I'm still not sure how best to handle them. Suffice it to say, we need more of them now that we have textures. We need to describe what a texture resource looks like to our shaders. Up until now, we've had one descriptor set for our basic engine - just a UBO and some basic geometry. Now, however, we have a second descriptor set that includes one or more textures. We need some code to decide which one to use, based on our scene-graph traversal. The code itself is somewhat boring, but it's important plumbing we need to just get on with.
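The decision itself can be small. A rough sketch, assuming (hypothetically) that binding 0 is always the UBO and that the textured layout adds one combined image sampler per texture from binding 1 onwards. The `DescriptorLayout`, `Binding` and `BindingKind` types are made up for illustration; real code would build `VkDescriptorSetLayoutBinding` structures instead.

```rust
/// Kinds of resource a shader binding can describe (a tiny subset of Vulkan's).
#[derive(Debug, Clone, Copy, PartialEq)]
pub enum BindingKind {
    UniformBuffer,
    CombinedImageSampler,
}

#[derive(Debug, Clone, Copy, PartialEq)]
pub struct Binding {
    pub binding: u32,
    pub kind: BindingKind,
}

/// The two descriptor set layouts: UBO only, or UBO plus textures.
#[derive(Debug, Clone, Copy, PartialEq)]
pub enum DescriptorLayout {
    UboOnly,
    UboAndTextures { texture_count: u32 },
}

/// Pick a layout from what the contract holds at draw time.
pub fn choose_layout(texture_count: usize) -> DescriptorLayout {
    if texture_count == 0 {
        DescriptorLayout::UboOnly
    } else {
        DescriptorLayout::UboAndTextures { texture_count: texture_count as u32 }
    }
}

impl DescriptorLayout {
    /// List the bindings the shaders would see for this layout.
    pub fn bindings(&self) -> Vec<Binding> {
        let mut out = vec![Binding { binding: 0, kind: BindingKind::UniformBuffer }];
        if let DescriptorLayout::UboAndTextures { texture_count } = *self {
            for i in 0..texture_count {
                out.push(Binding { binding: 1 + i, kind: BindingKind::CombinedImageSampler });
            }
        }
        out
    }
}
```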

Oh yes, I added some depth buffering since the last update! This fixed a glitch I noticed on the sphere model I was using. Depth buffering is a way of figuring out which things are in front of or behind other things. To do this, we record the depth of every fragment (a potential pixel) in a buffer. If another fragment appears at the same location, we keep the one closest to us - the one with the lower depth value. This means we need a Vulkan image, backed by its own memory, the same size as our swap-chain images (our draw buffers).
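Here is the depth test reduced to its essentials on the CPU, as a sketch: one depth value per pixel, initialised to the far value 1.0 (the usual clear value with Vulkan's 0-to-1 depth range), and a "less than" comparison deciding whether an incoming fragment replaces what's there. `DepthBuffer` and `test_and_set` are hypothetical names; on the GPU this is fixed-function hardware configured through the pipeline's depth-stencil state (for example `VK_COMPARE_OP_LESS`), not code we write.

```rust
/// A software depth buffer: one depth value per pixel, plus the colour that won.
pub struct DepthBuffer {
    width: usize,
    depths: Vec<f32>,
    colours: Vec<u32>,
}

impl DepthBuffer {
    /// Clear every depth to 1.0 (the far plane) and every colour to `background`.
    pub fn new(width: usize, height: usize, background: u32) -> Self {
        DepthBuffer {
            width,
            depths: vec![1.0; width * height],
            colours: vec![background; width * height],
        }
    }

    /// Depth test with a "less" comparison: the fragment is kept only if it is
    /// closer than what is already stored. Returns true if it was written.
    pub fn test_and_set(&mut self, x: usize, y: usize, depth: f32, colour: u32) -> bool {
        let i = y * self.width + x;
        if depth < self.depths[i] {
            self.depths[i] = depth;
            self.colours[i] = colour;
            true
        } else {
            false
        }
    }

    pub fn colour_at(&self, x: usize, y: usize) -> u32 {
        self.colours[y * self.width + x]
    }
}
```

Because the comparison happens per fragment, the final image of opaque geometry doesn't depend on the order the triangles were drawn in.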

The Vulkan Tutorial covers depth buffering pretty well. In a nutshell, we first query our Vulkan device for the depth formats it supports. Once we have one, we allocate a new block of memory for the depth image and create an ImageView of it. We also need to alter our Vulkan Graphics Pipeline to make use of this depth buffer. It took me a while to get all this in place - there are some synchronisation issues to contend with. Nevertheless, depth buffering is on by default. That's fine for now, but there will be times when we want to turn it off. I'll save that question for the future.
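The "query the device" step in the Vulkan Tutorial walks a list of candidate depth formats (`VK_FORMAT_D32_SFLOAT`, `VK_FORMAT_D32_SFLOAT_S8_UINT`, `VK_FORMAT_D24_UNORM_S8_UINT`) and takes the first one whose optimal-tiling features include `VK_FORMAT_FEATURE_DEPTH_STENCIL_ATTACHMENT_BIT`. Below is that selection logic in plain Rust, with a hypothetical `DepthFormat` enum and a closure standing in for the real `vkGetPhysicalDeviceFormatProperties` query, so it runs without a GPU.

```rust
/// The candidate depth formats, most preferred first.
#[derive(Debug, Clone, Copy, PartialEq)]
pub enum DepthFormat {
    D32Sfloat,
    D32SfloatS8Uint,
    D24UnormS8Uint,
}

impl DepthFormat {
    /// Formats with a stencil component need the stencil aspect handled as well.
    pub fn has_stencil(self) -> bool {
        matches!(self, DepthFormat::D32SfloatS8Uint | DepthFormat::D24UnormS8Uint)
    }
}

/// Return the first candidate the device reports as usable for a depth attachment.
/// `supports_depth_attachment` stands in for checking the format's optimal-tiling features.
pub fn find_depth_format<F>(supports_depth_attachment: F) -> Option<DepthFormat>
where
    F: Fn(DepthFormat) -> bool,
{
    [DepthFormat::D32Sfloat, DepthFormat::D32SfloatS8Uint, DepthFormat::D24UnormS8Uint]
        .iter()
        .copied()
        .find(|&format| supports_depth_attachment(format))
}
```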

At this point, things are beginning to get exciting. There is almost enough here to make a basic game. Certain things need tidying up, chief of which is error handling. Manipulating the scene-graph is still a little brittle, and we still need some way of working with multiple scenes. But it's close. My next goal is to create a basic model-loading program. To do that, I'll need to bring in Dear ImGui - a lovely little GUI library. This is trickier than you might think, as I need to use my existing scene-graph approach - nodes and all - to represent the GUI components. That might take a while, but I think it'll be worth it.

There's still so much to do, but very soon I can start on the fun stuff. Rather than dealing with Vulkan or Rust, I can start to work on graphics. Things like lighting, shadows, shader effects - all that stuff. I could make a game at this point - it'd be super simple but I'd mostly be working on the game logic as the graphics side is (almost) usable. A little tidy, a little work on the scene management and we'll have a tool.